
| author | 2025-07-22 18:00:27 +0200 |
|---|---|
| committer | 2025-07-22 18:00:27 +0200 |
| commit | c00cad2cebcb8136a998f6f7ba2c27672f785d10 |
| tree | 863516d8459713cc4b91c83d8aeeef3cac486b39 /vendor/github.com/grafana/regexp |
| parent | [chore/deps] Upgrade to go-sqlite 0.27.1 (#4334) |
| download | gotosocial-c00cad2cebcb8136a998f6f7ba2c27672f785d10.tar.xz |

[chore] bump dependencies (#4339)

- github.com/KimMachineGun/automemlimit v0.7.4

- github.com/miekg/dns v1.1.67

- github.com/minio/minio-go/v7 v7.0.95

- github.com/spf13/pflag v1.0.7

- github.com/tdewolff/minify/v2 v2.23.9

- github.com/uptrace/bun v1.2.15

- github.com/uptrace/bun/dialect/pgdialect v1.2.15

- github.com/uptrace/bun/dialect/sqlitedialect v1.2.15

- github.com/uptrace/bun/extra/bunotel v1.2.15

- golang.org/x/image v0.29.0

- golang.org/x/net v0.42.0

Reviewed-on: https://codeberg.org/superseriousbusiness/gotosocial/pulls/4339

Co-authored-by: kim <grufwub@gmail.com>

Co-committed-by: kim <grufwub@gmail.com>

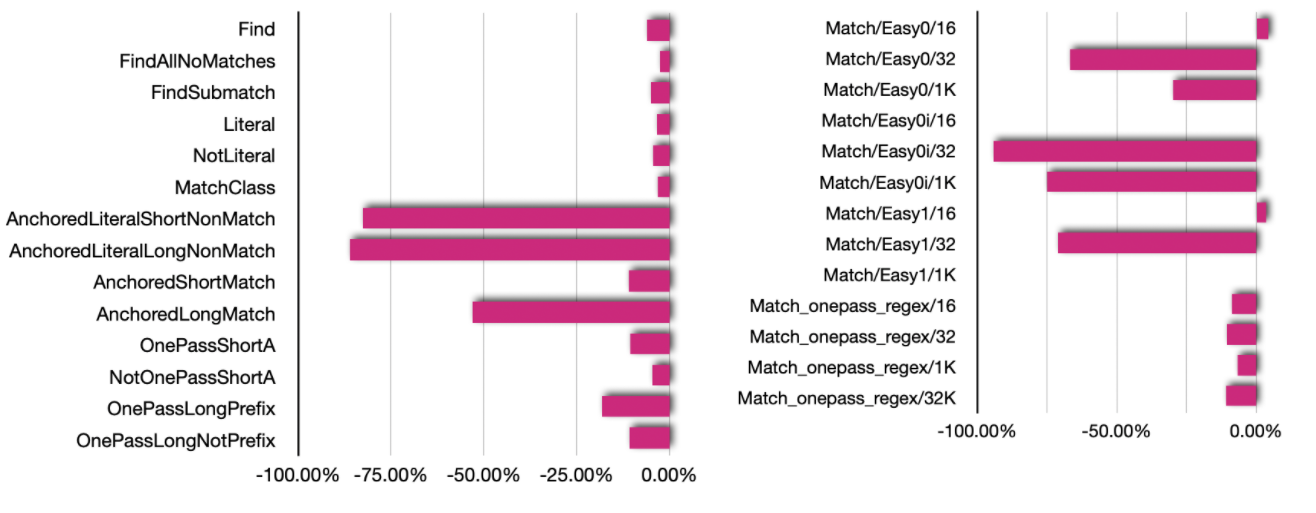

Diffstat (limited to 'vendor/github.com/grafana/regexp')

| mode | file | lines changed |
|---|---|---|
| -rw-r--r-- | vendor/github.com/grafana/regexp/.gitignore | 15 |
| -rw-r--r-- | vendor/github.com/grafana/regexp/LICENSE | 27 |
| -rw-r--r-- | vendor/github.com/grafana/regexp/README.md | 12 |
| -rw-r--r-- | vendor/github.com/grafana/regexp/backtrack.go | 365 |
| -rw-r--r-- | vendor/github.com/grafana/regexp/exec.go | 554 |
| -rw-r--r-- | vendor/github.com/grafana/regexp/onepass.go | 500 |
| -rw-r--r-- | vendor/github.com/grafana/regexp/regexp.go | 1304 |

7 files changed, 2777 insertions, 0 deletions